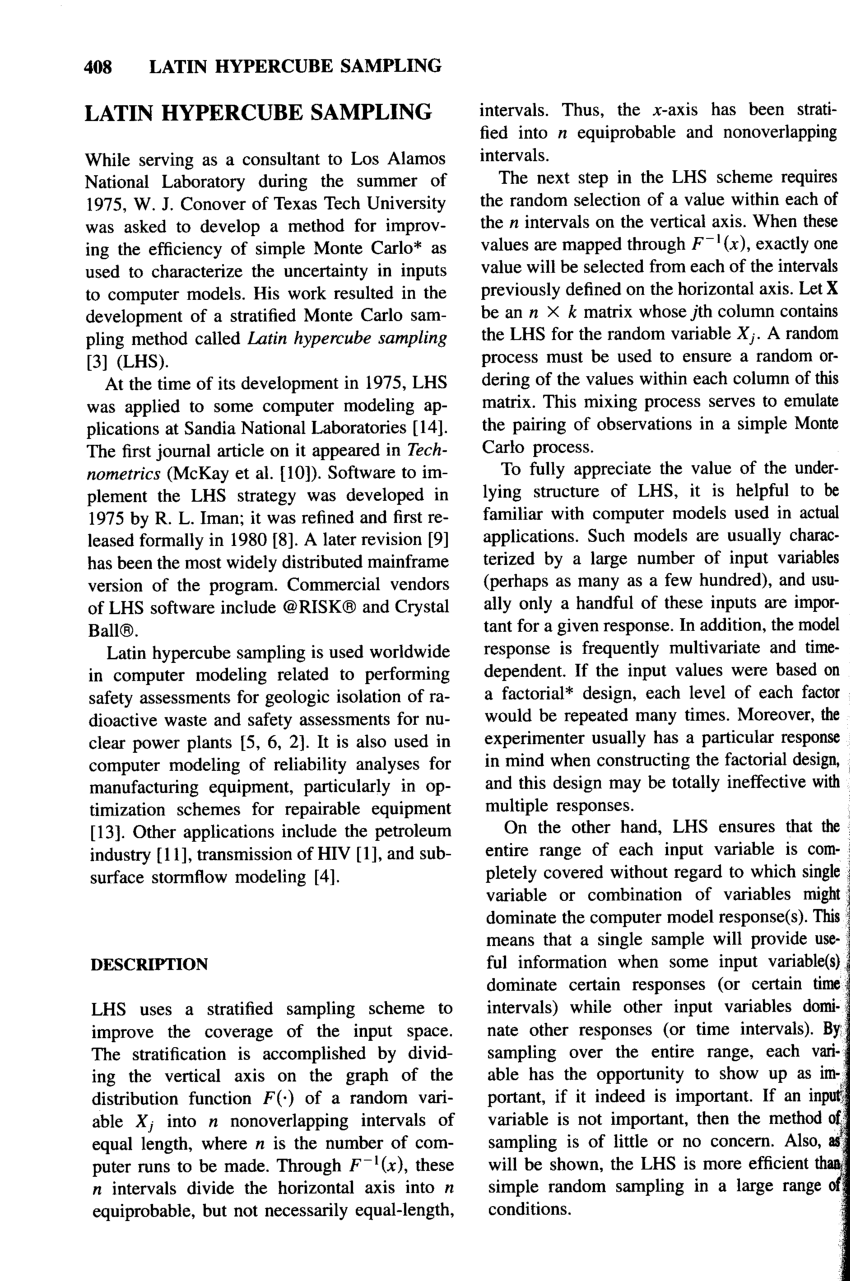

Latin Hypercube Sampling (LHS) at variable resolutions for enhanced watershed scale Soil Sampling and Digital Soil Mapping.

Sampsa Hamalainen, Xiaoyuan Geng, and Juanxia He. AAFC - Agriculture and Agri-Food Canada, Ottawa, Canada.

The Latin Hypercube Sampling (LHS) approach to assist with Digital Soil Mapping has been in use for some time; the purpose of this work was to complement LHS with multiple spatial resolutions of covariate datasets and variability in the number of sampling points produced. This allowed specific sets of LHS points to be produced to fulfil the needs of various partners from multiple projects working in the Canadian provinces of Ontario and Prince Edward Island. Secondary soil and environmental attributes are critical inputs required in the development of sampling points by LHS. These include a required Digital Elevation Model (DEM) and subsequent covariate datasets produced by a Digital Terrain Analysis performed on the DEM. These additional covariates often include, but are not limited to, Topographic Wetness Index (TWI), Length-Slope (LS) Factor, and Slope, which are continuous data. The number of points created by LHS ranged from 50 to 200, depending on the size of the watershed and, more importantly, the number of soil types found within it. The spatial resolution of the covariates used in the work ranged from 5 to 30 m. The iterations within the LHS sampling were run at an optimal level so that the LHS model provided a good spatial representation of the environmental attributes within the watershed.

The original poster was looking for an amount of "variance reduction" in the Latin hypercube. The plots they showed were the confidence intervals for the mean of their cost function with increasing sample size, for 1 dimension and 2 dimensions. If you read the chapter cited by the accepted answer, it talks about the effectiveness of variance reduction, or efficiency, being measured relative to some base algorithm like simple random sampling.

The conclusions in the literature are clear:

For functions which are "additive" in the margins of the Latin hypercube, the variance of the estimate is always less than that of a simple random sample of the same size, regardless of the number of dimensions and regardless of sample size. See the reference given in the accepted answer, and also Stein 1987 and Owen 1997.

For non-additive functions, the Latin hypercube sample may still provide benefit, but it is less certain to do so in all cases. An LHS of size $n > 1$ has variance in the non-additive estimator less than or equal to that of a simple random sample of size $(n-1)$. Owen 1997 says this is "not much worse than" simple random sampling.

These conclusions are all irrespective of the number of dimensions in the sample. There is no upper bound in dimensions for which LHS is proven to be effective.
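For reference, the two results attributed to Stein 1987 and Owen 1997 can be written compactly. This is a sketch in my own notation, as I understand those papers; the symbols $\hat{\mu}$, $\sigma^2$, $f_j$, and $r$ are assumptions introduced here. Take $X$ uniform on $[0,1]^d$, let $\mu = E[f(X)]$ and $\sigma^2 = \operatorname{Var}[f(X)]$, and let $\hat{\mu}$ be the mean of $f$ over $n$ sampled points.

$$
\operatorname{Var}(\hat{\mu}_{\mathrm{SRS}}) = \frac{\sigma^2}{n},
\qquad
\operatorname{Var}(\hat{\mu}_{\mathrm{LHS}}) \le \frac{\sigma^2}{n-1} \quad (n > 1).
$$

Writing $f$ as an additive part plus a remainder, the leading term of the LHS variance depends only on the remainder:

$$
f(x) = \mu + \sum_{j=1}^{d} f_j(x_j) + r(x),
\qquad
\operatorname{Var}(\hat{\mu}_{\mathrm{LHS}}) = \frac{1}{n}\int_{[0,1]^d} r(x)^2\,dx + o\!\left(\frac{1}{n}\right).
$$

When $f$ is additive, $r \equiv 0$ and the $1/n$ term vanishes, which is where the large gains come from. Neither statement places a limit on $d$.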

I interpret the literature cited in the accepted answer differently. I agree with the answer by R Carnell: there is no upper bound on the number of parameters/dimensions for which LHS is proven to be effective, though in many settings I've noticed that the relative benefits of LHS compared to simple random sampling tend to decrease as the number of dimensions increases. In practice, this behaviour doesn't actually matter. LHS is essentially never worse than simple random sampling, so you can always use LHS as a default sampling method and this decision won't cost you anything.

There's an interesting blog post by David Vose in which he explains why he doesn't implement LHS in his ModelRisk software. He seems to be considering the situation where it is trivial (by modern computing standards) to evaluate the output function at each sampled point in parameter space, so I don't think this article is a reason to avoid LHS. Indeed, many researchers continue to use LHS regularly as a default sampling option.

I also note a blog post by Lonnie Chrisman, which argues in favour of LHS as a default for sampling. This latter article also suggests a rule of thumb that LHS is most effective when at most 3 inputs/dimensions contribute most of the variation in the output. It also contains a number of references to the literature: some researchers have found that LHS substantially outperforms simple random sampling, whereas others have noted minimal improvements. Once you move outside the realm of additive functions, it's very hard to predict how much of an improvement you'll get.
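As a rough check on the points above, here is a minimal simulation sketch in plain Python. It builds a basic Latin hypercube (one point per stratum in each dimension) and compares the variance of the sample-mean estimator against simple random sampling. The test functions `additive` and `multiplicative`, the sample size of 20, and the 300 repetitions are my own illustrative assumptions, not taken from the cited posts or papers.

```python
import random
import statistics


def latin_hypercube(n, d, rng):
    """Draw n points in [0, 1)^d with one point per stratum in every dimension."""
    columns = []
    for _ in range(d):
        # One uniform draw inside each of the n equal-width strata...
        column = [(i + rng.random()) / n for i in range(n)]
        # ...then shuffle so strata are paired at random across dimensions.
        rng.shuffle(column)
        columns.append(column)
    return [tuple(column[i] for column in columns) for i in range(n)]


def simple_random(n, d, rng):
    """Draw n independent uniform points in [0, 1)^d."""
    return [tuple(rng.random() for _ in range(d)) for _ in range(n)]


def additive(x):
    """Additive test function: a sum of one-dimensional terms."""
    return sum(x)


def multiplicative(x):
    """Hypothetical non-additive test function; interactions grow with d."""
    result = 1.0
    for xi in x:
        result *= xi + 0.5
    return result


def variance_of_mean(sampler, f, n, d, reps=300, seed=0):
    """Empirical variance of the sample-mean estimator over repeated designs."""
    rng = random.Random(seed)
    means = [statistics.fmean(f(x) for x in sampler(n, d, rng)) for _ in range(reps)]
    return statistics.variance(means)


def variance_ratio(f, n, d):
    """Var(LHS mean) / Var(SRS mean); values below 1 favour LHS."""
    lhs = variance_of_mean(latin_hypercube, f, n, d)
    srs = variance_of_mean(simple_random, f, n, d)
    return lhs / srs


if __name__ == "__main__":
    for d in (1, 2, 5, 10):
        print(d, variance_ratio(additive, 20, d), variance_ratio(multiplicative, 20, d))
```

For the additive function the ratio should stay very small at every `d`, while for the product function it should drift upwards as `d` grows, because a larger share of the variance sits in interactions that the stratification does not control. This is one contrived example, so treat the size of the effect as illustrative only.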
